- Home

- About

- Contact

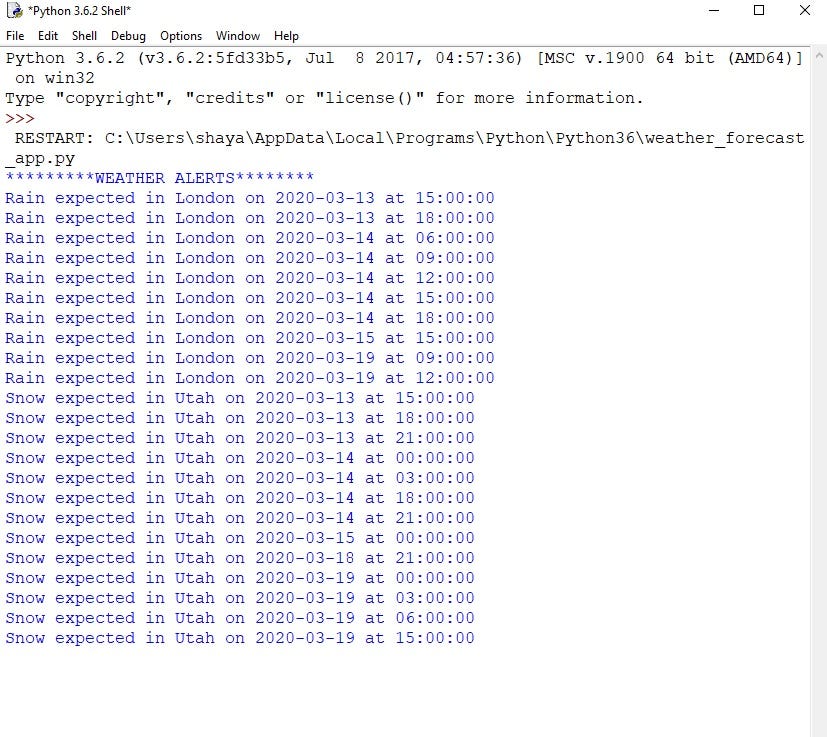

- Easy weather python code

- Id y evangelizar a los bautizados pepe prado pdf

- Bharat movie online putlocker

- Masterchef the professionals episode 10

- Autocad 2009 for sale

- What is siemens teamcenter

- Who did clapton write you look wonderful tonight song about

- Xiaopan 0-4-7-2 tutorial

- 3ds max 2016 bible

- Fusion 360 vs autocad

- Phantasmagoria game for pc

- Daz studio genesis 3 morphs to genesi 8

- Billy elliot full movie

- Quick step reclaime review

- Martin mpc 128 universes

- Nidhogg 2 xbox one

- Best app like audacity

- Sniper ghost warrior 1 gameplay

- Is unreal engine 4 free

- How to do an arched wall in profantasy

- Artcam 2015 download free

Hmmlearn uses the Baum-Welch algorithm under the hood to fit these parameters, which means that this is only a maximum likelihood estimate: i.e. these are the single most likely values for the parameters. Why should this bother us? Well, we have no idea of the variance! Unfortunately, this functionality is not easily available in Python, and to get it we would need to employ the much heavier machinery of Markov Chain Monte Carlo, Stochastic Variational Inference or some other general inference algorithm.

An excellent example of a Python library providing such features is Numpyro.
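To make the difference between a point estimate and a full posterior concrete, here is a short sketch. The notation is an assumption and does not come from the text above: $\theta = (\pi, A)$ collects the starting probabilities and the transition matrix, $B$ is the known emission matrix, $z_{1:T}$ are the hidden states and $y_{1:T}$ the observations.

$$
p(y_{1:T} \mid \theta) = \sum_{z_{1:T}} \pi_{z_1} B_{z_1 y_1} \prod_{t=2}^{T} A_{z_{t-1} z_t} B_{z_t y_t}
$$

$$
\hat{\theta}_{\mathrm{ML}} = \arg\max_{\theta} \, p(y_{1:T} \mid \theta),
\qquad
p(\theta \mid y_{1:T}) \propto p(y_{1:T} \mid \theta) \, p(\theta)
$$

Baum-Welch is an expectation-maximization procedure, so it returns a single point, in general a local maximum of the first quantity. A sampler such as MCMC instead draws from the distribution on the right, and the spread of those draws is the variance that the point estimate cannot report.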


Here we will use pyro to generate our random samples. `transition = torch.Tensor(,` `dist.Categorical(torch.Tensor()),` `observation = pyro.sample("y_".format(j, k),` `lengths = torch.Tensor(np.array()).to(torch.int)`

Inference

Next up is to define our model in terms of hmmlearn! For us, matching discrete hidden states to discrete observations means we need a Multinomial model: `model = hmm.MultinomialHMM(n_components=3, n_iter=10000, params="st", init_params="st")`

Now, this is where things get a little more complicated: let us suppose that the emission matrix for our system is known. Then we only want to perform inference on the starting states and transition matrix, hence we give hmmlearn the argument `params="st"`. Now, all that is left to do is fill in the known emission matrix: `model.emissionprob_ = emission.cpu().numpy().astype(float)`

Now to perform inference! To satisfy hmmlearn's API, we need to reshape the data a bit first. Importantly, since we will remove the dimension of the data that tells us which sequence is which, we also need to tell hmmlearn the length of each sequence in the now one-dimensional data: `model.fit(sequences.cpu().numpy().reshape(-1, 1).astype(int), lengths.cpu().numpy().astype(int))`

Critique the model

In order to get our inferred transition values, all we need to do is inspect our stateful HMM object: `model.transmat_` `array(,`

As we can see, this is an excellent fit! Despite the emission probabilities being highly confounding, we have managed to recover almost the exact transition matrix!

The full posterior: MCMC with numpyro


In that example, one or neither of the two matrices might be known: in particular, we may have a strong prior belief about how often the sensor is wrong from conducting experiments, hence we might know the emission matrix but not the transition matrix.

The easiest Python interface to hidden Markov models is the hmmlearn module. We can install this simply in our Python environment with: `conda install -c conda-forge hmmlearn`

First of all, let's generate a simple toy dataset by specifying the generating process for our hidden Markov model and sampling from it.
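As a rough illustration of sampling from a hidden Markov model with a known emission matrix, here is a minimal NumPy-only sketch. It is not the pyro code used in this post: the function name `sample_hmm`, the three-state parameter values, and the sequence count and length are all hypothetical and chosen only for illustration. The last lines pack the samples into the flat `(n_samples, 1)` array plus per-sequence lengths that hmmlearn's `fit(X, lengths)` expects.

```python
import numpy as np


def sample_hmm(start, transition, emission, length, rng):
    """Sample one (hidden, observed) pair of sequences from a discrete HMM."""
    hidden = np.empty(length, dtype=int)
    observed = np.empty(length, dtype=int)
    # Draw the initial hidden state from the starting distribution.
    state = rng.choice(len(start), p=start)
    for t in range(length):
        hidden[t] = state
        # Emit an observation conditioned on the current hidden state.
        observed[t] = rng.choice(emission.shape[1], p=emission[state])
        # Move to the next hidden state using the current state's row.
        state = rng.choice(transition.shape[1], p=transition[state])
    return hidden, observed


# Hypothetical parameters (illustrative values, not those of the original post).
start = np.array([0.4, 0.3, 0.3])
transition = np.array([[0.8, 0.1, 0.1],
                       [0.1, 0.8, 0.1],
                       [0.1, 0.1, 0.8]])
emission = np.array([[0.6, 0.2, 0.2],
                     [0.2, 0.6, 0.2],
                     [0.2, 0.2, 0.6]])

rng = np.random.default_rng(0)
sequences = [sample_hmm(start, transition, emission, length=50, rng=rng)[1]
             for _ in range(20)]

# Pack for hmmlearn: one long column of observations plus each sequence's length.
X = np.concatenate(sequences).reshape(-1, 1)
lengths = np.array([len(s) for s in sequences])
```

The lengths array is what lets the fitting routine know where one sequence ends and the next begins once everything has been flattened into a single column.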


The most immediately obvious application for these models might be something like the toy example with the weather: noisy measurements of some series in space or time.

Consider a sensor which tells you whether it is cloudy or clear, but is wrong with some probability. Now, the weather *is* cloudy or clear, and we could go and see which it was, so there is a “true” state, but we only have noisy observations from which to attempt to infer it.
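For a single reading, inferring the true state from a noisy sensor is just Bayes' rule. The sketch below assumes a symmetric sensor that flips the truth with a fixed probability; the function name `posterior_cloudy` and the numbers 0.3 and 0.2 are hypothetical, chosen only to illustrate the calculation.

```python
def posterior_cloudy(prior_cloudy, error_rate):
    """P(truly cloudy | sensor reports cloudy) for a sensor that reports
    the wrong state with probability error_rate."""
    # Sensor says cloudy and it really is cloudy (sensor was right).
    p_report_and_cloudy = prior_cloudy * (1.0 - error_rate)
    # Sensor says cloudy but it is actually clear (sensor was wrong).
    p_report_and_clear = (1.0 - prior_cloudy) * error_rate
    return p_report_and_cloudy / (p_report_and_cloudy + p_report_and_clear)


# Hypothetical numbers: cloudy 30% of the time, sensor wrong 20% of the time.
belief = posterior_cloudy(prior_cloudy=0.3, error_rate=0.2)
```

A hidden Markov model extends this one-step update by letting the prior for each time step depend on the state at the previous step.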